Many organizations question the development of data models. Abstract data models offer a variety of benefits, but they also come with some limitations.

Many managers, developers, architects, and data modelers confront both the challenges and the benefits of working with abstract data models and database schemas. Most education on these types of models comes from vendors who either sell a product that runs on such a model or provide a service that depends on proving the value of an abstract model.

There are many facets to consider when thinking about abstract data models: where they have value, where they present challenges, and some critical success factors when developing and using these artifacts.

Abstract Model Defined

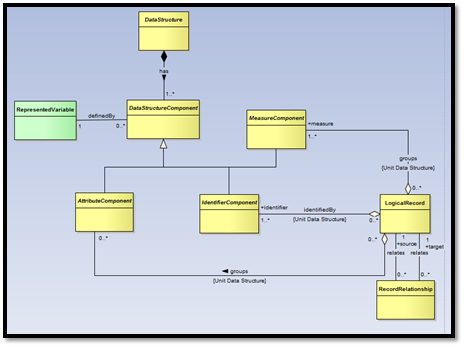

An abstract data model is a simplified representation of some data and its relationships. A conceptual data model and an abstract data model are similar in that both represent data and are meant to simplify the concepts for users. They differ in that abstract data models tend to be even simpler and to have a broader scope than conceptual models. The process of abstracting data combines similar things for higher-level classification and grouping. For example, patients, providers, employees, and researchers are all people. In theory, combining them into a single "person" concept provides one definition and one storage area for all people. The promise of significantly greater simplicity can be attractive, since it should result in reduced development time and lower costs. While in theory this makes the data easier to use, the information a corporation wants to capture about different types of people may differ. Thus, there is the challenge of capturing and separating the information that distinguishes patients from providers, providers from employees, and so on.
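
The trade-off can be sketched in a few lines of Python. This is only an illustrative sketch, not a recommended design: the `Person` class, the `add_role` method, the role names, and the attributes `mrn` and `hire_date` are all hypothetical names invented for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Person:
    """One abstract definition shared by patients, providers, employees, etc."""
    person_id: int
    name: str
    # Role-specific details do not fit the shared definition, so this sketch
    # keeps them in a per-role dictionary (hypothetical attribute names).
    roles: dict = field(default_factory=dict)

    def add_role(self, role: str, **attributes: str) -> None:
        self.roles[role] = dict(attributes)


# The same person can be both a patient and an employee.
pat = Person(1, "Pat Lee")
pat.add_role("patient", mrn="A-1001")
pat.add_role("employee", hire_date="2020-03-01")

# Shared data is defined once ...
print(pat.name)
# ... but role-specific data still has to be captured and separated per role.
print(sorted(pat.roles))
```

The shared name is stored once, yet the patient-only and employee-only details still need their own home, which is the separation challenge described above.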

The most common sources of abstract models fall into two main areas:

- Vendors – they are able to preach the promise that one database schema/model can run for any business in any industry.

- Desire to generalize functions – the promise of a Service Oriented Architecture can be leveraged by having abstracted models to handle edits, validations, and retrievals of similar data.

Value of Abstract Data Models

There are several areas where truly well-built abstract model solutions have value, based on the types of solutions they support.

Applications:

An abstract model can benefit applications in several ways. Generally, such a model is intended to make it easier to import data. A few specific examples of value include:

- Reduction in application development

- Reduction in support and maintenance

- More efficient use of data structures – data is stored once and leveraged many times

- Consistent application of edits and rules across data, which makes the data more consistent. For example, all addresses across all entities are handled with the same editing rules.
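
The address example in the last item can be sketched as a single shared rule. This is a minimal sketch under stated assumptions: the function `validate_address`, the field names, and the US-style postal code pattern are hypothetical and stand in for whatever editing rules an organization actually defines.

```python
import re

# Hypothetical rule: 5 digits, optionally followed by a hyphen and 4 digits.
POSTAL_CODE = re.compile(r"\d{5}(-\d{4})?")


def validate_address(address: dict) -> list:
    """Return a list of problems found; an empty list means the address passed."""
    problems = []
    for required in ("street", "city", "postal_code"):
        if not address.get(required, "").strip():
            problems.append(f"missing {required}")
    code = address.get("postal_code", "").strip()
    if code and not POSTAL_CODE.fullmatch(code):
        problems.append("invalid postal_code")
    return problems


# The same function is reused for every kind of entity that has an address.
patient_address = {"street": "1 Main St", "city": "Springfield", "postal_code": "12345"}
provider_address = {"street": "9 Elm Ave", "city": "", "postal_code": "1234"}

print(validate_address(patient_address))   # no problems
print(validate_address(provider_address))  # missing city, invalid postal code
```

Because patients and providers share one address definition, a change to the rule is made in one place and applies to both.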

Analytics:

The value of an abstract model in analytics also appears in several ways, but the details and dependencies seem more significant. Getting data from disparate sources to a level of integration that will support various analytics is probably the single biggest benefit. However, there are many ways to do this that do not require an abstract database. A few specific examples of value related to analytics include:

- Easier integration of data from disparate sources

- Faster construction of data loading jobs – consistent edits and cleansing rules that are reusable.

- Fewer tables in a normalized data structure

- Fewer tables in data marts, making support operations easier

- Fewer tables mean improved design, more efficient data load times, and less hardware/infrastructure required to perform the loads.

Challenges of Abstract Data Models

There are several challenges with using abstract data models, based on the types of solutions they support.

Applications:

- While the promise of a vendor having an abstraction-based solution that allows easy importation of all data is alluring, most abstraction-based vended solutions take far more time and money to implement than vendor demonstrations suggest. Stories of implementations that take several years are easy to find and easy to validate. Most implementations that end successfully are more a matter of surviving the project. The root cause can be traced to the challenge of mapping and defining specific data to very high-level definitions. This business metadata challenge is magnified in maintenance activities, since it can be extremely difficult to find the high-level abstract concept when support staff are trying to solve a problem that references a specific physical field.

- Many organizations have used abstract data models to design their master data management (MDM) initiatives but have not performed all the mapping and definition development needed to be successful. MDM will succeed when the abstract data model is supported by complete mapping and business metadata definitions from all subject areas.
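
The mapping problem in the items above can be pictured as a lookup between physical fields and abstract concepts. The sketch below is purely illustrative: the table, column, and concept names (`PATIENT.PAT_LAST_NM`, `Party.Name`, and so on) and the helper functions are hypothetical, and a real implementation would normally keep this metadata in a repository rather than in code.

```python
# Hypothetical business metadata: physical column -> abstract concept.
FIELD_TO_CONCEPT = {
    "PATIENT.PAT_LAST_NM": "Party.Name",
    "PROVIDER.PROV_LAST_NM": "Party.Name",
    "EMPLOYEE.EMP_ADDR_1": "Location.AddressLine",
}


def concept_for(physical_field: str):
    """Find the abstract concept for a physical field, or None if unmapped."""
    return FIELD_TO_CONCEPT.get(physical_field.upper())


def fields_for(concept: str) -> list:
    """List every physical field mapped to an abstract concept."""
    return sorted(f for f, c in FIELD_TO_CONCEPT.items() if c == concept)


print(concept_for("patient.pat_last_nm"))
print(fields_for("Party.Name"))
# An unmapped field returns None: this is the gap support staff run into.
print(concept_for("CLAIM.CLM_AMT"))
```

When the mapping is complete, support staff can move from a physical field to its abstract concept and back. When it is incomplete, the lookup simply fails, which is the maintenance difficulty described above.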

Analytics:

- Data marts – Using an abstract data model for a data mart can make navigation difficult for users. It is essential to build all the user insulation into the front-end solution, which causes it to become very complex and difficult to support. This is the single biggest compromise and architecture question to be answered: should the complexity be in the data or in the usage? Organizations that have a hard time deciding should choose simplicity for users. However, in the right circumstances, an abstract solution can be the perfect fit.

- While getting data in is easier with an abstract data model solution, getting data out is much more difficult. By following a sound architecture of putting a robust business intelligence / analytics application between the users and the database, a solution based on an abstract data model can be supported by experienced development and database professionals.

- Classifications that are very clearly defined and delineated are great candidates for abstraction. Unfortunately, many areas of data, especially in organizations new to analytics, do not fit these criteria. In this case, building and supporting these classifications becomes a significant challenge.
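
The "easy in, hard out" point can be illustrated with one extreme form of abstraction, a generic entity-attribute-value table. This is a sketch chosen only for illustration; the table name `entity_attribute`, its columns, and the sample data are assumptions, and most abstract models are not this generic. It uses Python's built-in `sqlite3` module.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE entity_attribute (entity_id INTEGER, attribute TEXT, value TEXT)"
)

# Getting data in: every source row becomes generic (entity, attribute, value) rows.
rows = [
    (1, "type", "patient"), (1, "name", "Pat Lee"), (1, "city", "Springfield"),
    (2, "type", "provider"), (2, "name", "Dr. Kim"), (2, "city", "Shelbyville"),
]
conn.executemany("INSERT INTO entity_attribute VALUES (?, ?, ?)", rows)

# Getting data out: even "name and city of each patient" needs a pivot.
query = """
    SELECT
        MAX(CASE WHEN attribute = 'name' THEN value END) AS name,
        MAX(CASE WHEN attribute = 'city' THEN value END) AS city
    FROM entity_attribute
    GROUP BY entity_id
    HAVING MAX(CASE WHEN attribute = 'type' THEN value END) = 'patient'
"""
patients = conn.execute(query).fetchall()
print(patients)
```

Loading needs a single generic insert, while a simple question requires pivot logic. This is the kind of complexity that a business intelligence layer has to hide from users.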

Critical Success Factors

There are some critical success factors to consider when leveraging an abstract model in applications or analytics.

- The level of abstraction presents the single biggest challenge. If the goal is to abstract only slightly from where root data would be defined, the impact is minimal. If the goal is to abstract to the highest level (e.g., the definition of a noun – a person, place, or thing – so that everything fits into those three categories), leveraging that data effectively can be immensely challenging unless the team is prepared and experienced.

- Developer experience. Having senior developers who have not just read about these types of solutions but have actually worked with them is critical to success. The best sources of these employees are people who have worked directly on developing vended software and integrators who have had to implement packages that they did not develop. In most cases, these resources have had to learn how to work with and maintain these solutions for multiple business clients with different needs.

- Data modeler experience. Data modelers who have built these types of models are nice to have, but if they have not developed solutions that leverage them and are not experienced in how to make them successful, the organization is in serious jeopardy of having a solution that looks good from a model perspective but is never used by the business. Unfortunately, this is one way that data modelers acquire a reputation for not delivering to the business.

- One solution to very high-level abstraction is to make the physical implementation a hybrid of the logical abstracted model. In this case, the logical data model contains information that is highly abstracted, but the physical model has a master person table that holds all people, plus a separate table for patients, another for providers, and so on. This allows the database administrator to ensure that any new person associated with a specific role has all the information necessary to define that type of person, while each person remains linked within the data to every role they perform.

- Consider the target area for the abstract model. An abstract model to run everything in the organization means there must be a large budget and much time to address applying a new model to all existing applications. Adopting a more targeted approach for a specific need will allow the data architecture team to maintain its focus and deliver a level of abstraction that is appropriate for that solution – be it one application or an area of applications or analytical solutions.
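
The hybrid physical implementation mentioned in the list above (a master person table plus one table per role) might look like the following. This is a minimal sketch using Python's built-in `sqlite3` module; the column names `mrn` and `npi` and the sample rows are hypothetical, and a production schema would carry far more detail.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")
conn.executescript("""
    CREATE TABLE person (            -- master table: every person, defined once
        person_id INTEGER PRIMARY KEY,
        name      TEXT NOT NULL
    );
    CREATE TABLE patient (           -- role table with patient-only requirements
        person_id INTEGER PRIMARY KEY REFERENCES person(person_id),
        mrn       TEXT NOT NULL
    );
    CREATE TABLE provider (          -- role table with provider-only requirements
        person_id INTEGER PRIMARY KEY REFERENCES person(person_id),
        npi       TEXT NOT NULL
    );
""")

# One person performing two roles, linked through the master table.
conn.execute("INSERT INTO person VALUES (1, 'Pat Lee')")
conn.execute("INSERT INTO patient VALUES (1, 'A-1001')")
conn.execute("INSERT INTO provider VALUES (1, '1234567890')")

# A role row that lacks its required information is rejected by the database.
conn.execute("INSERT INTO person VALUES (2, 'Sam Roe')")
try:
    conn.execute("INSERT INTO patient (person_id, mrn) VALUES (2, NULL)")
    rejected = False
except sqlite3.IntegrityError:
    rejected = True

roles = conn.execute("""
    SELECT p.name, pt.mrn, pr.npi
    FROM person p
    LEFT JOIN patient pt ON pt.person_id = p.person_id
    LEFT JOIN provider pr ON pr.person_id = p.person_id
    ORDER BY p.person_id
""").fetchall()
print(rejected, roles)
```

The `NOT NULL` constraints on the role tables are what let the database enforce role-specific requirements, while the shared `person_id` keeps each person linked across every role they perform.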

Conclusion

There is value in adopting an abstract data model approach to data architecture, especially for developing common concepts and defining them throughout the organization, using them across applications and in analytics.

Abstract data models can be hard to work with, and learning how to implement them can be difficult. With really strong, seasoned resources, effective solutions based on abstract models are possible, but remember the need for maintenance and support and the need to demonstrate the business value of the abstract data model.