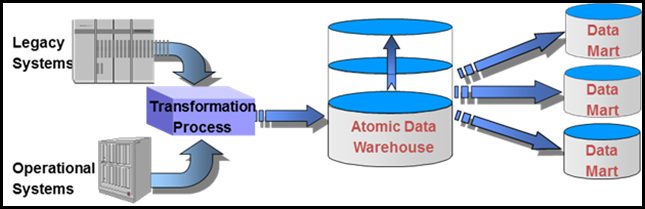

It is important to understand the value of capturing as much detailed root data as possible to enable the development and usage of views and other analytical perspectives when designing business intelligence solutions.

There are many levels of planning and challenges in data warehousing/business intelligence efforts. One specific challenge that has caused many projects to fall into an “analysis paralysis” trap is the detailed definition of all requirements and the metrics used to measure the value of the solution. Requirements definition typically means different things to each person. Yet, requirements are either loosely defined, or buttoned down to the extent they are not flexible.

Development teams may spend months to a year trying to identify all the calculations a business “MIGHT” need. Ideally, requirements should be clear, manageable, and sufficient for the solution to proceed. In data warehouse projects, it may be practical to consider separating data requirements from those of usage needs. One way to look at this is the difference between data and views. When defining and building the data warehouse, think about pulling all of the data related to the chosen subject area.

For example, while gathering data about an order, gather all root data about that order. If this is accomplished successfully, any views or calculations that users want to apply to that data are possible. If designers only deliver exactly what the business asks for, users will be forced to ask for additional related content continually. The more concerning aspect to this is a new design will be needed as new information is requested, because it was not planned for in the original design.

Re-Define Views

It is important to examine the role of views in a data warehouse or business intelligence / analytics solution more closely. If data is the actual information at its root level, then a view is one way to visualize data. For example:

- Derivatives or Calculations – These are simply calculations against data or combinations of fields that result in a new way to look at data.

- Age is a good derivative – It is the calculation of today’s date minus the birthdate. Age at time of clinical procedure is merely taking the date of the procedure and subtracting the birthdate. When thought of this way, it is easy to understand the value of storing the patient’s birthdate to provide the ability to tie it to any date attached to a person’s record.

- How about time of surgery – If the start time and end time are available, it is easy to calculate the total time. The hardest part in this example is not the calculation, rather it is the definition. Is it the time the person was wheeled into the operating room, or is it the time where the anesthesia was administered, etc.… The beauty of capturing all root data is that this challenge can be met easily. Ultimately, to satisfy many different types of users, store all of the appropriate times and then create the calculations that define what any specific user likely will want to measure based on business experience and careful elicitation of requirements.

- Net sales – Typically, this is a calculation, perhaps it is gross sales revenue minus commissions. Gross margin would be a further calculation based on business requirements, with net margin as an additional refinement. Discussions with users in this area should discover a variety of root data that would be valuable for additional views.

- Classifications or Groupings – This type of view would represent grouping of information. In healthcare there is a concept called an “Episode of Care.” Unfortunately, what an episode of care differs on every level – institution to institution, how an Electronic Medical Record (EMR) views an episode of care, potentially across medical practice areas, and most certainly from a billing vs. clinical care perspective. However, if the organization stores the root data about the visits, diagnosis, and other key data, theoretically it is possible for an episode of care to be viewed in different ways depending on the user’s perspective and analytical purpose.

Another classification theme might be a territory distribution. Using the details from order source and related data such as geography and personnel, it can be possible to analyze the results from a variety of perspectives. Most likely, there are many ways to adopt classifications and groups for any organization.

- Timescales – It can be invaluable to see analytical information spread across time. This could be used to reference the events of a day (flows of customers through facilities – by hour, day of the week, etc.…). In addition, it could be used to reference customer flow or volumes over the course of the year. One healthcare quality metric that is not easily measured is the number of quality errors that occur the day after the Super Bowl or the day after the interns start. While these may sound trivial, if access to this information, it would be possible to identify some specific areas of business that could be improved, for competitive advantage and more.

Data Maturity and Data Analytics

Most Business Intelligence or Data Warehousing efforts put a tremendous amount of focus into the specifics of business derivatives and views. In industries where the data is well captured and understood, this is a great approach. In those cases where corporate data is in some disarray, where there is a lack of enterprise definitions or terminology, or where much of the data is not captured, that same approach can be fruitless. Analytical solutions that don’t contain the data the business wants, and therefore must be re-designed, are rarely the fault of any tool or technology, but directly related to the capture and storage of the root data.

Generally, business intelligence and analytics should be as putting the pretty face on the front of a well-architected, terminology consistent, sound data warehouse. This is how understood the organization can realize the benefits of building 60 – 120 day solutions that all of the experts preach is standard. These estimates do not include the examination of the root data’s fitness for use, the maturity of the data for serving the business intelligence or analytical purposes. If the data is not “fit for use,” the 60- 120 day estimates are woefully inadequate.

Take the time to capture that root data and clearly define it (storing meta data for users to leverage) so the fun part of data warehousing – business intelligence can begin. Yes, actually working with the business to analyze data and develop new derivatives, calculations, classifications, timescales, and views is downright fun. As the business better understands its identity and analyzing results becomes a way of life, both business and the corresponding IT resources quickly grow. The complexity of the tools grows, the business strategy is realigned, and pockets of expertise are in open view.

Conclusion

In an industry where the data is not leveraged well for analysis, it is nearly impossible to come up with all of the views of data that will benefit the company at each turHowever, it is best to capture the data at its root level and work with the business to test out new metrics and views. While this sounds like skirting requirements, it actually is a paradigm shift. It is defining the high level and root data requirements at the start of the initiative, and defining the detailed calculations and classifications once the root data is in place and tested.

With the power and beauty of the BI tools available in the market place, it is much less important which tool is used. Rather, it is essential that the root data is captured sufficiently to be available for a wide variety of views and uses. Tools for reporting, analysis, mining, visualization, and other methods of exploration are all relatively easy to use in calculations/derivatives/views.